Publishing something online has never been easier. A photograph, a piece of writing, a report, even a simple LinkedIn post: content moves quickly, often reaching audiences far beyond its original context.

But that circulation comes with a trade-off. It is not uncommon to come across your own work elsewhere, reposted, reused, or sometimes altered, without clear attribution or acknowledgment of its origin.

For creators, journalists, photographers, and anyone producing content, this raises a fundamental question:

What really happens to what we publish once it enters the digital ecosystem?

When content circulates without its context

Most digital platforms were not originally designed to keep track of where content comes from or how it should be used. When creating an account, users agree to terms and conditions they rarely read, and many did so years before artificial intelligence became part of everyday digital practice.

Since then, the environment has evolved significantly.

Content is no longer simply shared; it is analysed, repurposed, and in many cases used to train AI systems. Yet the information that gives it meaning (its origin, its author, and the conditions under which it may be reused) is often lost as it circulates.

As a result, images are downloaded and reposted, texts are reused without attribution, and ideas circulate far beyond their original context, often detached from those who created them. What is available online is frequently treated as if it were free to use, even when it is not.

As the origin of creative work becomes less visible and its use remains uncontrolled, the conditions that sustain human creation begin to weaken. Without clear visibility or control, creators are less inclined to publish, share, and contribute.

A different approach to publishing and protection

Within the TEMS (Trusted European Media Data Space) initiative, new approaches are being implemented to address this gap. Among them, Trial 7, operated by Panodyssey, focuses on making intellectual property visible, traceable, and usable in digital environments.

The trial centres on making existing rights explicit, structured, and understandable, both for humans and for machines. This begins at the moment a work is published, by ensuring that content, metadata, and rights information remain inseparable.

On Panodyssey, a publication is not just a piece of content. It is structured from the outset with metadata that identifies its author, its origin, and its context, while also defining the conditions under which it may be reused.

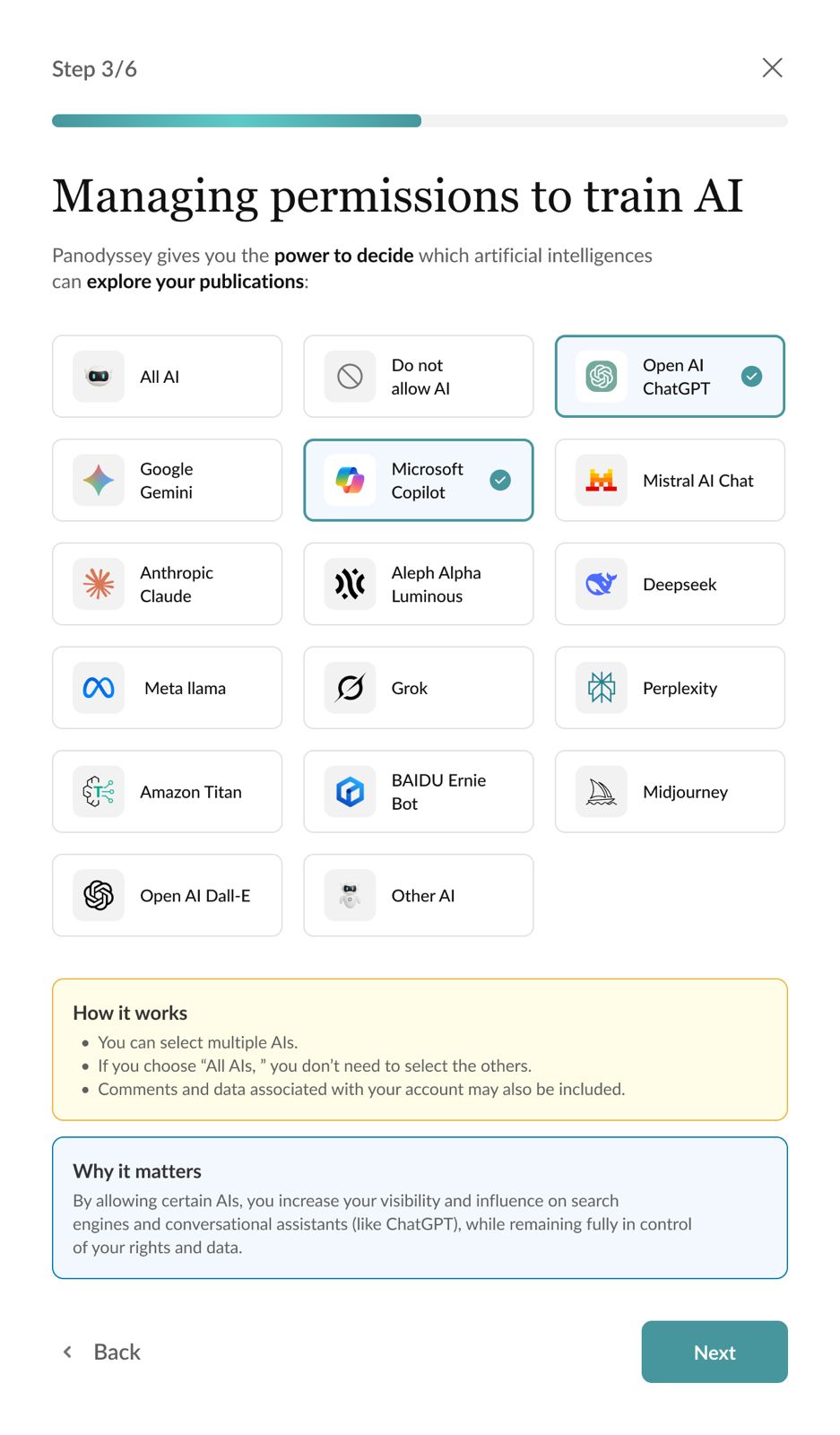
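As a rough illustration of what "inseparable" can mean in practice, the sketch below keeps content, metadata, and rights information in a single record and derives one fingerprint from all three. The `Publication` class and its field names are hypothetical and chosen for this example only; they do not describe Panodyssey's actual data model.

```python
from dataclasses import dataclass
import hashlib
import json


@dataclass(frozen=True)
class Publication:
    """Hypothetical record; field names are illustrative, not Panodyssey's schema."""
    body: str
    author: str
    origin_url: str
    context: str
    reuse_conditions: str

    def to_record(self) -> dict:
        # Content, metadata, and rights are serialised together as one unit.
        return {
            "content": self.body,
            "metadata": {
                "author": self.author,
                "origin": self.origin_url,
                "context": self.context,
            },
            "rights": {"reuse": self.reuse_conditions},
        }

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys) so the same record always hashes the same.
        canonical = json.dumps(self.to_record(), sort_keys=True, ensure_ascii=False)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


original = Publication(
    body="A short essay.",
    author="Jane Doe",
    origin_url="https://example.org/essay",
    context="First published as a blog post",
    reuse_conditions="attribution required",
)
# Same text, but with the author, origin, context, and rights stripped away.
stripped = Publication(
    body=original.body, author="", origin_url="", context="", reuse_conditions=""
)

# False: the copy without its metadata no longer matches the original record.
print(original.fingerprint() == stripped.fingerprint())
```

Because the fingerprint covers the whole record, a copy that has lost its attribution or rights information can be told apart from the original even when the text itself is unchanged.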

This approach is reinforced by the AI Transparency Notice, developed by Panodyssey in collaboration with Agencia EFE. Through this mechanism, creators can indicate how their work was produced (human-made, generated by artificial intelligence, or co-created with AI tools) and specify whether or not it may be used to train AI systems.

This second dimension is essential in the context of European copyright law. Under Directive (EU) 2019/790, rights holders can oppose the use of their works for text and data mining. For this opposition to be effective, it must be expressed in a way that can be recognised by machines.

By attaching structured, machine-readable signals to each publication, Panodyssey makes it possible to express this opt-out in practice. The information becomes understandable and interpretable by platforms and AI systems, allowing them to identify whether a given work may or may not be used for AI training, in line with emerging standards such as TDMRep.
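To make this more concrete, here is a minimal sketch of how such an opt-out could be expressed and read. It assumes the property names `tdm-reservation` and `tdm-policy` and the per-location entry format from the TDMRep specification as we understand it; the current specification text should be checked before relying on them. The function names and the example URL are hypothetical, and nothing here describes how Panodyssey implements the signal.

```python
from typing import Dict, Optional


def tdm_headers(training_allowed: bool, policy_url: Optional[str] = None) -> Dict[str, str]:
    """Build HTTP response headers that express the creator's choice."""
    if training_allowed:
        return {"tdm-reservation": "0"}
    headers = {"tdm-reservation": "1"}  # 1 = text and data mining rights are reserved
    if policy_url:
        # Optional pointer to a policy describing the conditions of use.
        headers["tdm-policy"] = policy_url
    return headers


def well_known_entry(location: str, training_allowed: bool,
                     policy_url: Optional[str] = None) -> dict:
    """Build one entry for a site-wide JSON file listing reservations per path."""
    entry = {"location": location, "tdm-reservation": 0 if training_allowed else 1}
    if policy_url and not training_allowed:
        entry["tdm-policy"] = policy_url
    return entry


def may_mine(response_headers: Dict[str, str]) -> bool:
    """Crawler-side check of the header channel only.

    A missing header means no reservation was found here; it is not in itself
    a grant of permission.
    """
    normalised = {k.lower(): v.strip() for k, v in response_headers.items()}
    return normalised.get("tdm-reservation", "0") != "1"


headers = tdm_headers(training_allowed=False,
                      policy_url="https://example.org/tdm-policy.json")
print(headers)            # {'tdm-reservation': '1', 'tdm-policy': 'https://example.org/tdm-policy.json'}
print(may_mine(headers))  # False
```

The same choice made by the creator feeds both sides: the publishing platform emits the signal, and a crawler or AI system reads it before deciding whether the work may be mined.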

This approach is complemented by account certification for both individuals and organisations. By linking content to verified identities, it becomes easier to trace the origin of works and establish accountability across the platform. Rights information can also be updated or adjusted, allowing creators and publishers to refine the conditions of use as their needs evolve.

What emerges is a different model of publication, one in which content is no longer separated from the information that defines it, but instead carries its origin, its authorship, and its conditions of use wherever it goes.

Aligning with European regulatory frameworks

This approach aligns with a broader shift in European regulation, where transparency and control over the use of content are becoming central.

With the AI Act, new expectations are emerging around the transparency of data used to train artificial intelligence systems. At the same time, existing copyright rules, notably Directive (EU) 2019/790, already provide a legal basis for rights holders to oppose the use of their works for text and data mining.

This direction is also reflected in recent discussions at the European level. A report presented by Axel Voss, Member of the European Parliament, highlights the need for greater transparency in the use of protected works by AI systems, as well as effective mechanisms for rights holders to retain control.

A building block within the TEMS ecosystem

The work carried out in Trial 7 shows how intellectual property can be made visible and operational in digital environments.

In practice, this means that a publication continues to carry its origin, its authorship, and its conditions of use as it circulates.

Within TEMS, these mechanisms form part of a broader effort to build a shared European data space where media content can move more reliably, with its context and rights intact. For media organisations, broadcasters, publishers, and cultural institutions, this opens up new possibilities: content can be shared, reused, and monetised with greater clarity, while creators retain visibility and control over their work.

But beyond infrastructure and regulation, creative work depends on individuals who write, compose, document, and create, often driven by talent, time, and personal investment. When the conditions under which this work is shared become unclear or uncontrolled, the incentive to publish and contribute begins to weaken.

Ensuring that content remains traceable, attributed, and used under clear conditions is not only a technical or legal matter. It is also a way of preserving the place of human creation in digital environments increasingly shaped by artificial intelligence.